HE Higher Education Ranking

Strategic Review, Growth Outlook, and Development Agenda

| Purpose | Evidence base | Core question |
| --- | --- | --- |
| To provide a publication-ready long-form analysis of HE Higher Education Ranking for web use. | Public website pages, IREG listing, and the HE 2026 questionnaire. | How strong is the ranking today, what should be improved, and where could it realistically be by 2030? |

Report structure

| Section | Focus |
| --- | --- |
| Executive Summary | A concise view of what the ranking already does well, what it still needs, and why the next stage matters. |
| Overview | What HE Ranking is, how it is structured, and what makes its whole-institution logic distinctive. |
| Questionnaire philosophy | What the 2026 instrument reveals about the ranking’s deeper assumptions about quality. |
| Prospects and positioning | Why the ranking has room to grow and where it sits in the wider ranking landscape. |
| Strengths and weaknesses | What is working now, and where the ranking is most vulnerable to scepticism. |
| Promotion and enhancement | How to market the ranking more intelligently and how to strengthen it structurally. |
| Growth outlook | A scenario-based projection for the ranking’s likely participation trajectory through 2030. |

Executive Summary

HE Higher Education Ranking has moved, in only a few cycles, from a young initiative to something more consequential: a framework that is beginning to occupy its own niche in the crowded and often predictable world of university rankings. That niche is not built on prestige theatre alone. It is built on a wider claim. The ranking presents itself as an institutional framework rather than a narrow research scoreboard, and its public materials repeatedly underline breadth, transparency, annual improvement, evidence-based participation, and relevance for universities, students, and policymakers. The 2026 questionnaire supports that claim. It stretches across research, teaching, internationalization, student success, governance, digital readiness, academic freedom, social media visibility, distance learning, sustainability, transparency, labour-market alignment, and even the university’s futuristic orientation. In plain terms, the ranking is trying to say something larger than “who is first?” It is trying to say “what kind of university are you becoming?”

That ambition matters. Many ranking systems talk as though universities are single-purpose machines whose value can be read almost entirely through citation counts, reputational halo, or selective internationalization metrics. HE Ranking is plainly resisting that habit. It evaluates the university as an operating organism with multiple organs, multiple pressures, multiple audiences, and more than one pathway to excellence. That gives it an unusual strategic advantage. It can speak to institutions that feel unseen by mainstream rankings: younger universities, private universities, applied institutions, regional institutions, and universities in emerging systems that may be doing serious work but not yet commanding bibliometric weight. There is a real market for that kind of framework, not because every institution wants flattering news, but because many institutions want to be measured in a way that resembles reality.

Still, a promising model and a trusted model are not the same thing. Publicly available materials already reveal a few tensions that cannot be ignored if the ranking wants to mature. Some pages emphasise 136 KPIs, while other public descriptions refer to 177 performance indicators. One methodology page states that the ranking is issued in March, but the same page also mentions publication in December. The website speaks confidently about evidence-first logic and constructive comparison, yet the public-facing explanation of audit, sampling, challenge procedures, exclusions, and post-publication corrections remains lighter than it should be for a data-collection-based ranking. None of this is fatal. Young rankings evolve. But the difference between a fast-growing initiative and a durable reference point is often found in these supposedly minor details. Trust is built in the margins.

This report takes the ranking seriously enough to be exacting with it. The aim is neither celebration for its own sake nor casual criticism. The aim is to read HE Ranking as a strategic higher-education project: to explain what it is, why it has traction, where its strengths really lie, where its vulnerabilities sit, how it could be promoted more effectively, how it could be strengthened structurally, and what its participation trajectory may look like through 2030. The resulting picture is encouraging. The ranking has a strong conceptual core, a clear developmental promise, and a believable growth story. Yet it now stands at a decisive threshold. The next stage of growth should not be driven by volume alone. It should be driven by methodological precision, disciplined communication, stronger public verification language, and a sharper articulation of what makes this ranking indispensable rather than merely available.

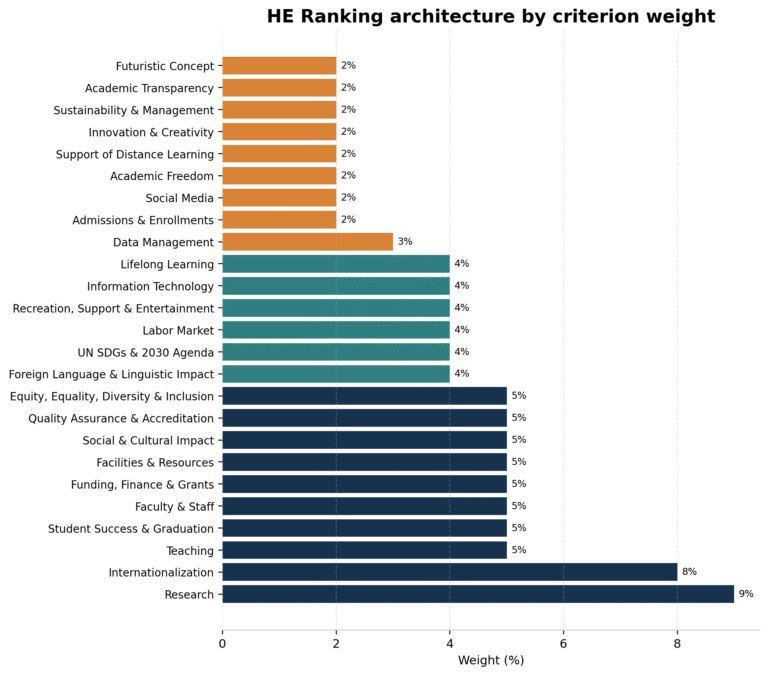

Figure 1. Public weight architecture of HE Ranking, based on the criterion distribution described in the public methodology record.

The chart shows a deliberately distributed framework: research and internationalization carry strong influence, but operational, governance, student-facing, and future-oriented dimensions remain visible rather than ornamental.

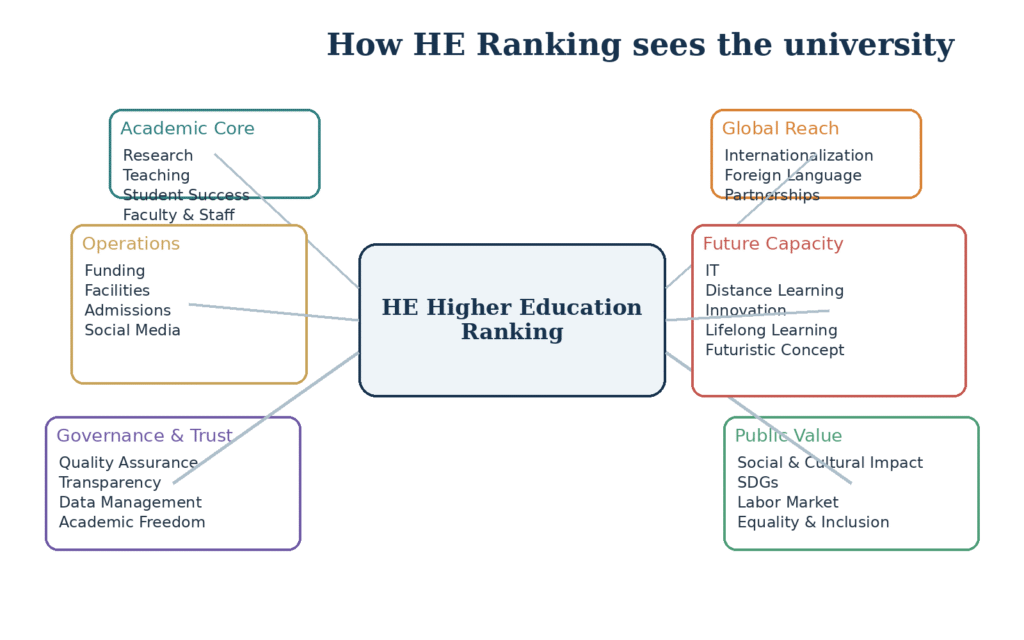

Figure 2. Conceptual reading of how HE Ranking organises the university as an institutional system.

This visual is interpretive rather than official. It illustrates the breadth of the ranking and why HE cannot be understood as a single-metric table.

To understand HE Higher Education Ranking properly, one has to start with what it is trying to measure. Publicly, the ranking describes itself as an institutional, not programme-level, ranking. That distinction is not cosmetic. It means the object of assessment is the university as a whole (its systems, habits, outputs, policies, reach, and developmental posture) rather than the isolated performance of a single faculty, a single subject area, or a purely reputational signal. The overview page says the model relies on 25 criteria and 136 KPIs, while the methodology page presents the same 25-criterion structure but, elsewhere on that page, refers to 177 performance indicators. The questionnaire for the 2026 edition confirms the practical breadth of the framework regardless of which KPI count is treated as definitive. What emerges from these materials is unmistakable: HE is built to assess the whole university as a functioning institution rather than a narrow brand object.

That whole-institution logic is where the ranking begins to look distinctive. In the questionnaire, research is not reduced to one blunt metric. It is read through journals, publications, indexing patterns, citations, awards, patents, intellectual-property outputs, participation in joint research, and authored or edited books. Internationalization is not framed only as incoming student volume; it is extended to staff mobility, external professional experience, partnerships, international projects, and the academic background of young researchers. Teaching is not treated as a mystical black box either. The questionnaire asks about attendance patterns, licensing examination performance, executive education, micro-credentials, and industry alignment. Then the framework keeps going. It examines student success, staffing structure, finance, facilities, social and cultural activity, accreditation, equality, foreign-language delivery, SDG alignment, labour-market engagement, recreation, IT capacity, lifelong learning, data management, admissions behaviour, social media activity, academic freedom, online learning, innovation, governance, transparency, and future planning. It is difficult to accuse the ranking of looking at too little. If anything, the opposite question appears: can it keep so much breadth disciplined and intelligible?

A ranking like this is often best understood less as a scoreboard and more as an institutional map. Traditional rankings, especially global media-facing ones, tend to behave like telescopes: they look far, they admire visible brightness, and they privilege whatever can already be seen at a distance. HE is trying to act more like a survey instrument used on the ground. It asks: What internal capacities exist? How robust is governance? What does student participation look like? How widely is academic information disclosed? Is there real infrastructure behind digital learning? Are there signs of social engagement, sustainability, or future planning? That orientation changes the implied audience. The ranking is not talking only to applicants or headline writers. It is talking to vice chancellors, QA directors, institutional researchers, boards, ministries, and universities that want an external mirror with a developmental tilt.

That developmental tilt is not incidental language on the site. It is central to the brand. The project page says participating institutions receive diagnostic reporting. The methodology page says universities get criterion-level and indicator-level results after publication. The FAQ frames the methodology as a macro-enhancement tool rather than a narrow prestige contest. The questionnaire introduction does the same in more operational terms, presenting the exercise as a way to generate analytical insights that can support quality assurance and strategic planning. This is smart positioning. It allows HE Ranking to occupy a slightly different moral ground from rankings that present themselves as neutral truth machines while quietly behaving like fame indexes. HE, at least in its public self-description, is saying something softer but arguably more useful: comparison should serve improvement.

The weighting architecture reinforces this impression. Public material available through the IREG Observatory lists a deliberately diversified weighting model in which research carries the heaviest single share at 9%, internationalization follows at 8%, and a cluster of operational and academic domains carry meaningful but not overwhelming mid-level weights. A second layer of categories, such as language, SDGs, labour-market engagement, IT, recreation, and lifelong learning, receives moderate but visible weight. A final layer covers academic freedom, online learning, admissions, sustainability, transparency, futuristic planning, and related institutional conditions. There is a philosophical statement embedded in that distribution. The ranking is refusing the idea that one dimension should swallow the rest. It is also refusing the fiction that governance, transparency, or student-facing conditions are merely decorative around the “real” work of universities. In HE’s structure, institutional ecology matters.
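To make the arithmetic of a distributed weighting model concrete, the sketch below treats the composite as a simple weighted sum of criterion scores on a 0-100 scale. This is an illustrative assumption, not HE’s published formula: the public materials do not spell out the aggregation or normalisation rules in this form. Only the research (9%) and internationalization (8%) weights come from the public record; the single `all_other_criteria` entry is a hypothetical placeholder standing in for the remaining 23 criteria so that the weights total 100%, and both institutional profiles are invented for illustration.

```python
# Hypothetical sketch: a weighted-sum composite as one possible way to aggregate
# a distributed weighting model. ASSUMPTION: HE's actual aggregation and
# normalisation rules are not published in this form. Only the research (9%)
# and internationalization (8%) weights come from the public record; the
# grouped remainder is a placeholder so that the weights total 100%.

WEIGHTS = {
    "research": 0.09,              # public weighting record
    "internationalization": 0.08,  # public weighting record
    "all_other_criteria": 0.83,    # hypothetical aggregate of the remaining 23 criteria
}


def composite_score(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Return the weighted sum of criterion scores (0-100 scale); weights must sum to 1."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return sum(weights[name] * scores[name] for name in weights)


def max_swing(criterion: str, weights: dict[str, float] = WEIGHTS) -> float:
    """Largest possible change in the composite when one criterion moves from 0 to 100."""
    return weights[criterion] * 100.0


if __name__ == "__main__":
    # Two hypothetical institutions, identical except at opposite research extremes.
    research_led = {"research": 100.0, "internationalization": 50.0, "all_other_criteria": 50.0}
    broad_profile = {"research": 0.0, "internationalization": 50.0, "all_other_criteria": 50.0}
    print(round(composite_score(research_led), 2))   # 54.5
    print(round(composite_score(broad_profile), 2))  # 45.5
    print(round(max_swing("research"), 2))           # 9.0
```

Under these assumptions, even a maximal research advantage moves the composite by only 9 points out of 100: enough to matter, not enough to decide the outcome alone. The real effect would depend on how HE normalises indicator scores before weighting them.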

This broad architecture has one especially important implication. It gives the ranking a potentially strong relationship with mission diversity. A teaching-led university, a regional applied institution, a young private university, or a university in a system still building its research infrastructure may be weakly represented in legacy rankings even when it performs capably in governance, industry alignment, multilingual education, social engagement, or operational quality. HE’s model makes room for those dimensions. That does not automatically guarantee fairness. Breadth can be mishandled. But it does widen the space in which different institutional identities can be recognized. In a sector that often pretends all universities are climbing the same mountain, that is no small conceptual intervention.

The website’s public rhetoric strengthens this inclusive posture. The home page speaks of fair comparisons and real decisions. The overview page frames the KPIs as evidence-first and auditable. Blog themes on the site engage with responsible ranking, global-south visibility, policy relevance, and partnership-building. All of that suggests the project is attempting to construct not only a methodology but also a discourse around what responsible ranking should look like. This is one reason the initiative has more promise than a simple league table might suggest. Rankings that endure do not survive on calculation alone. They survive because they tell a persuasive story about what counts in higher education and why their lens deserves attention. HE has started to build such a story.

And yet, the overview would be incomplete if it ignored the tension between conceptual ambition and documentary coherence. The site now presents a cleaner, more contemporary language than some earlier formulations, which is a welcome sign. Still, the coexistence of 136 KPIs, 137 indicators in some promotional language, and 177 performance indicators in the methodology record means the public face of the framework is not fully settled. For an institutionally ambitious ranking, this matters more than it may first appear. The ranking is asking universities to trust its structure, invest time in evidence submission, and then use its results strategically. In return, the public architecture must feel fully synchronised. The promise is big; the public documentation must be equally exact. That gap—manageable but visible—sits at the heart of the ranking’s current identity.

Even with that caveat, the basic conclusion is clear. HE Higher Education Ranking is not a derivative clone of the better-known global tables. It is attempting to build a more textured measure of institutional operation. Some observers will like that immediately; others will find it messy, ambitious, or even overextended. But no honest reading can call it empty. The questionnaire shows real effort. The website shows a maturing attempt to communicate purpose. The external IREG listing gives it a degree of visibility in the wider rankings conversation. The model is still young enough to be shaped, but old enough now to be taken seriously. That is where this analysis begins.

2A. What the 2026 Questionnaire Reveals About the Ranking’s Philosophy

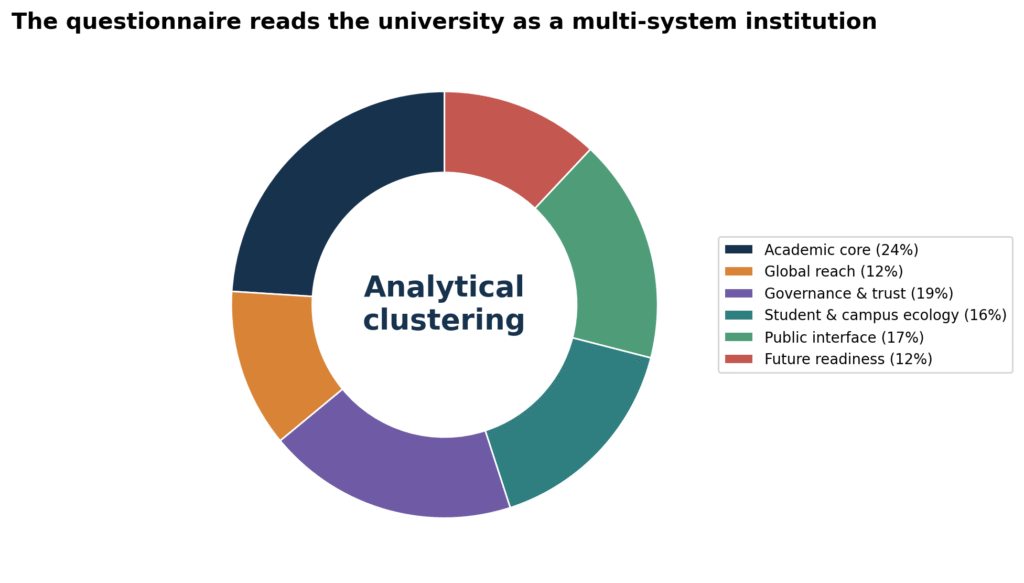

Figure 3. Analytical clustering of the questionnaire’s concerns into six institutional domains.

Questionnaires are revealing documents. They often expose a ranking’s true philosophy more clearly than a website slogan ever will, because questionnaires show where attention is being spent, what kinds of evidence are considered meaningful, and what assumptions the designers make about institutional quality. The 2026 HE questionnaire is unusually revealing in this regard. It does not simply gather a handful of headline figures and call the job done. It asks universities to present themselves as layered institutions with research capacity, teaching patterns, governance arrangements, digital practices, social presence, inclusion structures, international linkages, labour-market relations, and forward-looking commitments. The document reads, in effect, like a theory of what a university should be able to demonstrate if it wants to claim seriousness in the contemporary higher-education landscape.

One of the clearest things the questionnaire reveals is that the ranking does not believe quality is confined to outputs alone. In some ranking models, outputs dominate so strongly that every other domain becomes a footnote. In HE’s questionnaire, outputs matter, but they sit alongside policy, infrastructure, participation, disclosure, support, and institutional behaviour. A university is asked not only how many things it has produced, but also what systems it has built, what supports it offers, how it manages knowledge, how it relates to society, how visibly it communicates, and whether it is preparing for the future rather than merely surviving the present. That choice is philosophically significant. It places institutional capability at the centre of assessment.

The research section illustrates this nicely. It is not satisfied with raw publication volume. It asks about journals, indexed outputs, citations, awards, patents, joint research, staffing intensity, and authored or edited books. This suggests that the ranking sees research as an ecosystem rather than a single statistic. The internationalization section follows a similar pattern. It does not stop with international student numbers. It asks about academic mobility, international professional experience, partnerships, research projects, and the background of young researchers. The same logic appears in teaching, where attendance, licensing exams, executive programmes, professional development offerings, and industry alignment all appear. Each of these clusters implies that the ranking is trying to capture institutional depth rather than surface branding.

Another revealing feature is the prominence of governance-adjacent criteria. Quality assurance, accreditation, data management, academic transparency, sustainability and management, admissions patterns, and even the internal handling of politics, racism, and radicalism appear in the instrument. A more conventional ranking would likely leave many of these matters to internal audit or national regulators. HE pulls them into the ranking frame. That move can be debated, of course. Some will argue that rankings should stay closer to externally visible performance and farther from internal governance behaviour. Yet the very inclusion of these areas says something important about the project’s worldview: it sees the university as a civic and administrative institution, not only as a producer of degrees and papers.

The questionnaire also reveals a strong concern with legibility. How visible are strategies, policies, operational plans, and public disclosures? How frequently is the website updated? How active is the university across major platforms? How accessible is information to students and the wider public? These questions suggest that HE is interested not only in whether the university is doing things, but whether it can be seen doing them. This is an interesting and arguably contemporary stance. In a world where institutional credibility increasingly depends on digital transparency and public communication, opacity is no longer a neutral condition. The questionnaire quietly treats visibility as part of institutional responsibility.

There is another layer that deserves attention: the questionnaire’s moral imagination. It asks about equity, inclusion, disability accommodation, equal opportunities, academic freedom, intercultural learning, civic engagement, sustainability, and community participation. One could dismiss this as fashionable language if it were detached from the actual instrument, but it is not. These concerns are embedded in the scoring architecture. That means the ranking is not treating them as symbolic accessories. It is acknowledging that universities today are judged not only by their academic outputs but by the conditions of participation, belonging, openness, and responsibility they create. Whether every one of these matters can be measured with perfect fairness is open to debate. But the normative position is clear: universities are public institutions in a broad social sense, and the ranking intends to evaluate them accordingly.

At the same time, the questionnaire reveals some of the ranking’s burdens. Because the framework is so wide, it must constantly negotiate between measurability and meaning. Some items are easy to document numerically. Others are more interpretive. Some capture the existence of a policy; others aim, more ambitiously, to capture quality, intensity, or effectiveness. This creates a methodological challenge. A wide questionnaire can express an admirable philosophy and still struggle with calibration. The point here is not to criticise the existence of the broad instrument. It is to recognise that the very richness of the questionnaire increases the importance of technical exactness in scoring rules, evidence standards, and indicator wording.

The document also reveals a ranking that thinks in practical administrative categories rather than in abstract academic mythology. This is, frankly, refreshing. Universities often like to describe themselves using grand language—excellence, innovation, leadership, impact—without disclosing the machinery that makes those claims credible. The HE questionnaire does not let institutions stay at the level of slogan. It asks them about meetings, platforms, programmes, plans, activities, ratios, infrastructures, and documented practices. In other words, it pulls the conversation downward toward operations. That may not flatter every institution. It will, however, resonate with those who know that the difference between aspiration and performance is usually found in process.

There is one more thing the questionnaire makes visible: HE is trying to convert ranking participation into a form of structured institutional self-examination. Institutions filling out such a document are not only submitting data to an external body; they are also forced to take inventory of their own systems. Do they have the policy? Is it publicly accessible? Are the data actually retrievable? Is the online learning platform robust or merely nominal? Are labour-market meetings regular or sporadic? Is the future plan specific or decorative? These are questions that many universities should ask themselves whether or not a ranking requires it. In that sense, the questionnaire functions as a soft audit instrument as much as a ranking instrument. That hybrid role is one of the reasons the project has unusual potential.

Seen this way, the 2026 questionnaire is not just a technical annex to the ranking. It is one of the best windows into the ranking’s intellectual ambition. It reveals a framework that wants to measure universities as functioning, accountable, future-facing institutions embedded in social, academic, digital, and international environments. It also reveals why the ranking must now become stricter about methodological presentation. A questionnaire this ambitious deserves a public technical structure equally ambitious in clarity. Otherwise the instrument’s seriousness risks being partially hidden by the looseness of the public wrapper around it.

2. Prospects of the Ranking

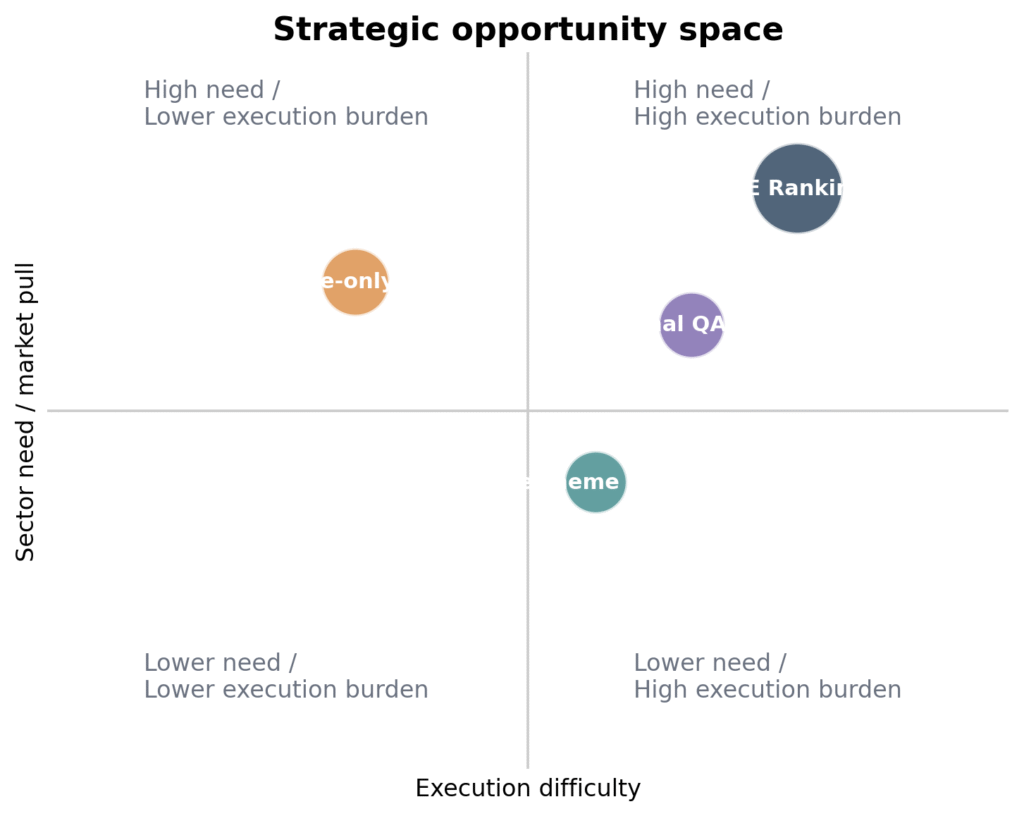

Figure 4. Opportunity space for HE Ranking relative to other forms of ranking or benchmarking.

The quadrant reflects an analytical judgement: the sectoral need for a broader institutional framework is high, but turning that need into trust requires unusually careful execution.

The prospects for HE Higher Education Ranking are, in broad terms, favourable. Not effortless, not guaranteed, but favourable. The reason is simple: the ranking sits at the intersection of several unmet needs in higher education. Universities want visibility, yes, but many also want recognition that is not chained to the prestige hierarchies of a small group of wealthy, research-saturated systems. Quality-assurance units want benchmarking tools that speak their language rather than merely delivering reputational theatre. Policymakers increasingly want instruments that help them see institutional operation in fuller terms—governance, inclusion, employability, digital readiness, evidence culture, partnerships, and social impact—not just publication output. HE is built close to those conversations. In that sense, its design is timely.

There is also a structural reason for optimism. Ranking fatigue is real. A great many universities have learned to live with mainstream rankings without actually believing they are well represented by them. Institutions that are mission-driven, regionally embedded, professionally oriented, recently established, multilingual, or still expanding their research systems often feel that the existing global tables catch them only in silhouette. HE offers a different proposition. It does not ask whether a university looks like a classic research giant. It asks whether the university is functioning credibly, developing broadly, and aligning its internal systems with a more expansive view of quality. That proposition is not only attractive; it is commercially and reputationally intelligent. It enlarges the pool of institutions that can see themselves in the framework.

The ranking’s prospects are strengthened further by the way it frames participation. The questionnaire and participation materials underline free participation and the provision of analytical reports. That matters. Free participation lowers the barrier to entry. A diagnostic report creates perceived value beyond mere listing. Together, they shift the ranking from a one-way reputational mechanism into a two-way institutional service. Universities are more likely to engage with a ranking repeatedly when they feel they are not only being judged but also being helped to interpret their own position. It is one thing to say, “You are number X.” It is another to say, “Here is the pattern behind your position, and here are the areas in which improvement could plausibly move the institution.” The second model has a stickier future.

The project also benefits from a public narrative that is reasonably well aligned with contemporary sector anxieties. The site speaks the language of transparency, mission diversity, fairness, evidence, responsible ranking, and constructive comparison. These are not fringe concerns. They are precisely the concerns that critics of rankings have raised for years. Now, one should be careful here. Borrowing the language of responsibility is not the same as fully satisfying the standard. Still, it is far easier to strengthen an existing responsible-ranking narrative than to invent one from scratch after the fact. HE has already positioned itself as a reformist rather than purely competitive ranking. That rhetorical base gives it room to grow into a more trusted actor if it chooses to do the hard methodological work.

Another encouraging sign is external visibility. The IREG Observatory entry does not certify methodological perfection, but it places the ranking within a recognized international information environment devoted to academic rankings. That matters symbolically and strategically. It signals that the ranking is not entirely invisible beyond its own website. For a project first published in 2023, that kind of visibility can become a bridge toward wider academic and policy recognition, especially if it is followed by stronger technical transparency and more systematic annual reporting. Put bluntly, the ranking already has enough public existence to be noticed; the next challenge is to become noticed for the right reasons.

The best growth prospects, in practical terms, are likely to come from four segments. The first is the Global South and emerging systems, where many institutions actively seek international visibility but resist being measured only through reputational inheritance or highly concentrated bibliometric power. The second is private and independent universities that want a broader account of institutional seriousness. The third is universities in reforming or volatile systems that need structured external frameworks to support internal modernization. The fourth is institutional leadership itself—rectors, QA centres, ranking offices, strategic planning units—because these actors are increasingly willing to use external metrics instrumentally, not reverentially. HE has a chance to become useful in that space because it talks in managerial and developmental language rather than only in winner-takes-all symbolism.

Still, the prospects should not be romanticised. Breadth alone does not guarantee legitimacy. In fact, the broader the framework, the higher the burden of proof. Universities and external observers will eventually ask a series of demanding questions. How exactly are self-reported claims checked? Which types of evidence are considered sufficient? How are ambiguous cases handled? What happens when a university overstates its performance, supplies weak documentation, or fails to disclose contradictory public information? Are all criteria equally auditable across contexts? How are cross-country differences normalised? What is done with institutions that are difficult to compare directly? These are not hostile questions; they are maturation questions. A ranking with serious aspirations will have to answer them in public and with precision.

Prospects, then, depend on a subtle balance. The ranking should continue to scale, because scale itself produces legitimacy signals: more countries, more participants, more institutional recognition, more conversation. But growth that outpaces technical credibility eventually becomes fragile. One can imagine two futures quite easily. In the first, HE continues expanding, improves its public technical documentation, tightens its verification language, publishes annual methodological notes, and becomes known as a rigorous alternative framework for broad institutional assessment. In the second, it grows numerically but remains vulnerable to scepticism because documentary inconsistencies and audit opacity persist. The same participation figures could exist in both futures; what would differ is interpretive authority.

On balance, the positive scenario is more plausible than the negative one. The site has evolved. The framing has become more coherent. The ranking’s thematic breadth is not accidental but clearly designed. The questionnaire shows that substantial thought has gone into what the ranking wishes to capture. Most importantly, the sectoral need is real. Universities are not suffering from too much meaningful benchmarking; they are suffering from too much shallow hierarchy and too little nuanced comparability. HE, if refined and disciplined, can speak to that problem with unusual force. Its prospects are good because the need it answers has not gone away. Quite the opposite: it is becoming sharper every year.

3A. Strategic Positioning in the Global Ranking Landscape

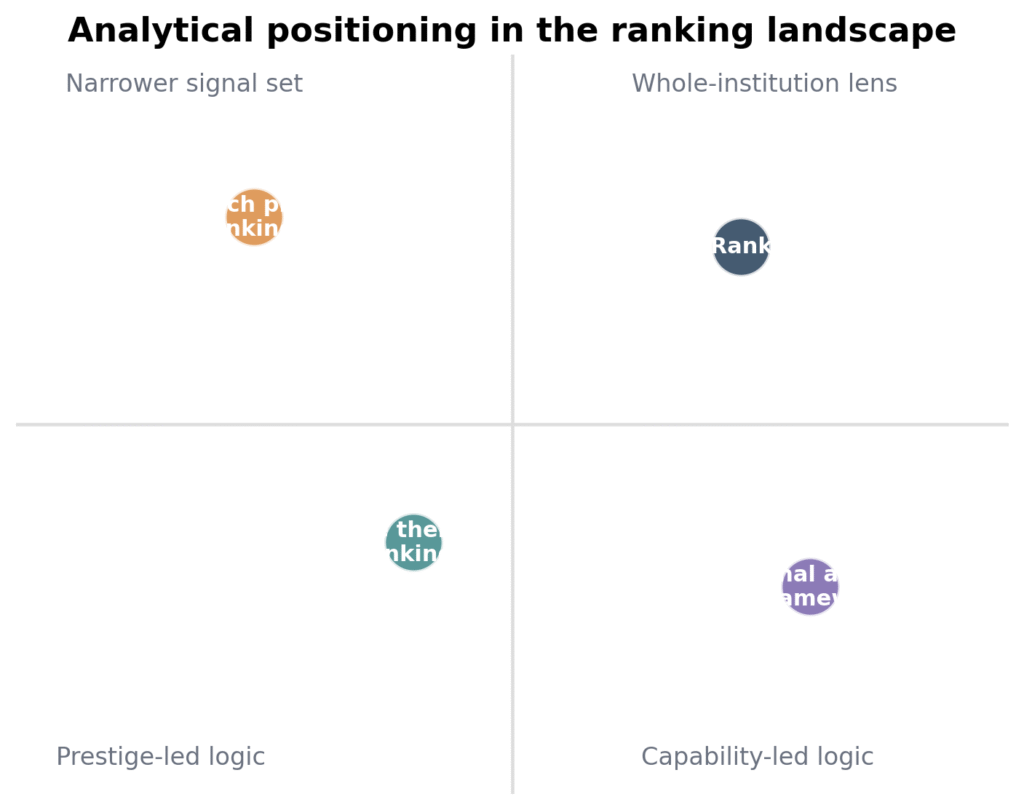

Figure 5. Analytical positioning of HE Ranking in the wider rankings landscape.

Placed within the wider ranking landscape, HE Higher Education Ranking occupies a space that is both crowded and oddly open. Crowded, because the higher-education world is saturated with rankings of one kind or another. Open, because many of those rankings still cluster around a relatively narrow idea of what counts. Research power, citation density, historic prestige, international visibility, and reputational echo remain dominant in much of the field. HE is interesting precisely because it does not begin from that same centre of gravity. Its ambition is wider, more institutional, and more managerial in the best sense of the word. That positioning could make it redundant if handled poorly. It could also make it genuinely distinctive if handled well.

There are, broadly speaking, three families of rankings in the current market. The first family prizes research intensity and reputational concentration. These rankings shape global headlines and institutional bragging rights, but they often reward universities that were already structurally advantaged long before the ranking existed. The second family focuses on narrower thematic domains—specific disciplines, employability, digital presence, sustainability, or innovation. These can be useful, but they often isolate one dimension from the rest of institutional life. The third family, into which HE is trying to move, aims to evaluate the university as a broad institutional system. This third family is smaller and harder to execute because it requires more data, more nuance, and more careful communication. Yet it is also the family with the greatest room for new entrants because the need for broader institutional benchmarking is increasing.

HE’s opportunity within that third family lies in its combination of inclusiveness and developmental logic. Many universities are already tired of being told, year after year, that excellence is essentially what the top of an established hierarchy looks like. That message is neither analytically sufficient nor strategically useful for much of the sector. A younger institution in a reforming national system may need a different kind of benchmark—one that notices whether governance has become more transparent, whether online infrastructure has improved, whether accreditation culture is deepening, whether industry linkages are becoming more serious, whether internationalization is moving beyond tokenism, and whether future planning is becoming operational rather than rhetorical. HE speaks more naturally to those concerns than the classic global prestige tables do.

This is why the ranking should resist the temptation to imitate larger global brands too closely. Young rankings sometimes believe that legitimacy will come from sounding like the oldest players in the room. Usually the opposite happens. They lose whatever distinctiveness they had and still fail to inherit the prestige of the incumbents. HE’s better strategy is differentiation through clarity. It should say, unapologetically, that it measures institutional capability more broadly than many mainstream rankings and that this is not a weakness but a considered choice. The ranking is not stronger when it pretends to be a smaller version of a citation-led table. It is stronger when it clarifies that it is evaluating something those tables only partially see.

That said, strategic positioning requires discipline about limits. HE should not promise that its framework captures everything that matters about a university. No ranking can do that. One of the most persuasive lines in the site's responsible-ranking commentary is the acknowledgement that no framework can capture every nuance of institutional life. That spirit should be carried throughout the brand. The ranking's distinctive claim is not omniscience. It is broader institutional usefulness. That is a more believable and more sustainable identity.

Another important positioning advantage lies in language. HE can speak simultaneously to two worlds that often remain oddly separate: the world of public ranking visibility and the world of internal university management. Most rankings speak fluently to the first and weakly to the second. Internal audit tools speak fluently to the second and barely at all to the first. HE, because of its questionnaire design and its developmental reporting promise, can bridge those worlds. This bridge should become a core part of its strategic identity. A university should feel that by joining HE it is not only entering a ranking table but also entering a structured process of institutional reflection. That is a compelling proposition if communicated well.

Positioning also depends on tone. The strongest tone for HE is not combative superiority. It is disciplined seriousness. The ranking should sound aware of the limits of rankings as a genre while also being confident about the value of its own design. Too much defensiveness would make it sound insecure. Too much triumphalism would make it sound immature. The right tone is something like this: universities are complex, mainstream signals are often incomplete, institutions still need external reference points, and HE offers one of the more textured ways to think about institutional performance. That is sober, believable, and difficult to dismiss casually.

Finally, the global landscape rewards not only methodological distinctiveness but recognisable use cases. A ranking becomes memorable when people know what they would use it for. HE should therefore be positioned through clear use cases: benchmarking institutional maturity, strengthening strategic planning, informing QA conversations, supporting international visibility, demonstrating mission-consistent development, and surfacing capabilities beyond research prestige alone. Once those use cases become instinctive, the ranking will have moved from “another ranking exists” to “this is the ranking for that kind of problem.” That is the point at which positioning stops being promotional rhetoric and starts becoming market reality.

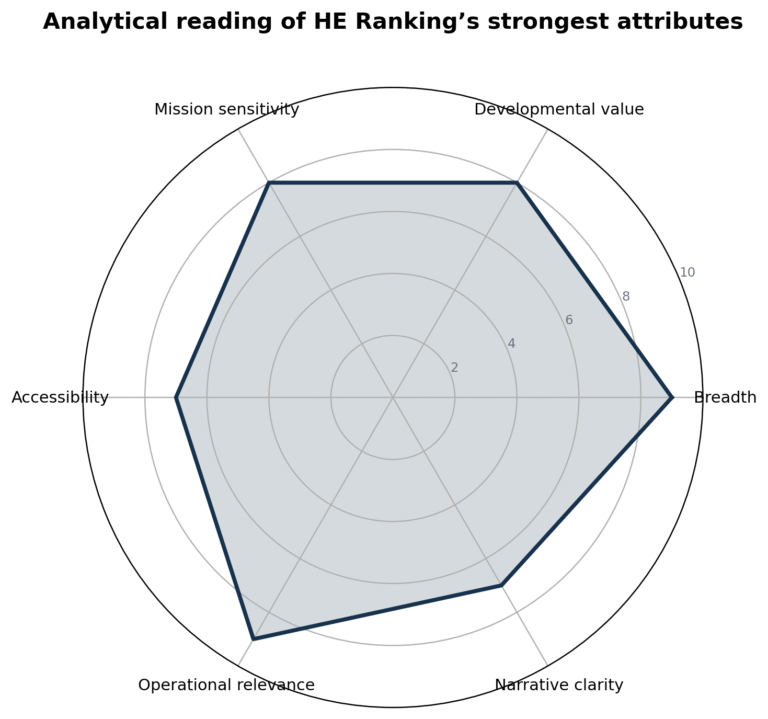

3. Strong Points

The first strong point of HE Higher Education Ranking is conceptual breadth anchored in institutional reality. That phrase can sound abstract, but the questionnaire makes it concrete. Many rankings are broad only in marketing copy and narrow in practice. HE appears to reverse that pattern. The practical instrument reaches into research, teaching, staffing, governance, finance, infrastructure, internationalization, labour-market engagement, student outcomes, social visibility, academic freedom, technological preparedness, and future planning. This is not breadth for decoration; it is breadth that resembles the actual agenda of university management. When university leaders sit around a table to discuss institutional progress, these are the kinds of domains they talk about. That alone gives the ranking a kind of operational credibility that more glamour-driven models often lack.

A second strong point is the ranking’s developmental philosophy. The public materials do not frame the exercise as a punishment mechanism or as a ritual of prestige confirmation. They repeatedly describe it as useful for planning, enhancement, and constructive benchmarking. This matters because rankings shape behaviour. A ranking that rewards only visibility may encourage superficial signalling. A ranking that makes room for planning, governance, evidence culture, and service provision can, at least in principle, encourage institutions to strengthen internal systems rather than only polish external narratives. That is a quiet but substantial advantage. It means the ranking can position itself as part reputational instrument, part improvement architecture.

Third, the ranking has a persuasive inclusiveness. The website's language around fair comparisons, mission diversity, and emerging systems is not ornamental. It is supported by the architecture of the questionnaire. A university that lacks elite-scale publication volume can still demonstrate strength in multilingual provision, digital support, quality assurance, partnerships, social engagement, student services, or governance. A university in an emerging system can still show disciplined planning, accreditation culture, community connection, and future orientation. In a sector where international visibility often mirrors the historical concentration of resources, this wider space of recognisable competence is one of the ranking's most attractive features. It does not erase inequality, but it softens the tyranny of one-dimensional comparison.

Fourth, the weighting pattern is more balanced than many observers would expect. Research matters, and it should. But research is not allowed to behave like an empire that colonizes every other domain. Internationalization has meaningful presence. So do teaching, student success, staffing, quality assurance, finance, facilities, and social or cultural impact. Even categories that are often ignored elsewhere—academic transparency, distance learning, data management, futuristic planning—are not dismissed as marginal ornaments. They are assigned weight, modest perhaps, but real. That sends a signal about the kind of institutional life the ranking values. It is saying, in effect, that quality is distributed. It lives in systems, not merely in citation graphs.

Fifth, the ranking is relatively accessible in communication terms. Its website is open, its participation model is visible, and its framing is understandable even to non-specialists. Some ranking systems cultivate opacity as though mystery were evidence of sophistication. HE leans the other way. The overview and FAQ pages attempt to explain what is being measured and why. The home page speaks in an applied register about fair comparisons and decision-making. This communicative clarity is not a minor asset. Rankings do not circulate only among data specialists. They move through universities, ministries, media outlets, student communities, and partnership conversations. A framework that can explain itself without collapsing into jargon has an easier path to adoption.

Sixth, the project has early narrative coherence. By that, one does not mean total coherence in every technical detail; there are visible inconsistencies, and those will be addressed elsewhere in this report. The point here is different. The ranking already knows what sort of story it wants to tell about itself. It wants to be seen as independent, open, constructive, evidence-aware, globally inclusive, and improvement-oriented. It has blog posts and explanatory pages that reinforce those themes. That matters because rankings are not only measurement systems; they are public narratives about value. HE is not drifting aimlessly in that regard. It has a discernible identity.

Seventh, the 2026 questionnaire itself suggests institutional seriousness. There is no easy way to assemble such a framework if one has not spent considerable time thinking about university operations. One can certainly criticise elements of emphasis, wording, or calibration. Yet the instrument does not read like a superficial form assembled from clichés. It reads like a document shaped by close attention to how universities function and how administrators classify evidence. In reputational terms, this matters. Sophisticated users notice when an instrument has been designed by people who actually understand institutional machinery.

Finally, there is a subtler strength that may matter most over time: HE Ranking is trying to connect ranking culture to improvement culture. That is not easy. Rankings attract attention because they simplify. Improvement depends on detail, and detail complicates. HE is trying to keep both impulses in play. It wants visibility, but it also wants granularity. It wants comparability, but it also wants mission sensitivity. It wants public recognition, but it also wants institutional feedback. Tensions remain, unquestionably. Yet the attempt itself is strategically intelligent. If the ranking can keep that dual character without becoming muddled, remaining both publicly legible and internally useful, it will possess a competitive advantage that many louder rankings do not.