There is a difference between a ranking that merely classifies institutions and a ranking that helps institutions understand themselves. That distinction matters more than many people admit. In higher education, numbers travel fast, screenshots circulate quickly, and tables are often shared before the underlying story is even read. Yet universities are not built only out of prestige signals. They are built out of systems, documentation, evidence, leadership choices, staff commitment, student experience, public trust, and the discipline of continuous improvement. That is why the 2026 edition of HE Higher Education Ranking deserves to be read as more than a scoreboard. It is a performance landscape that brings together 507 institutions from 119 countries and looks at universities through a broader, more balanced lens.

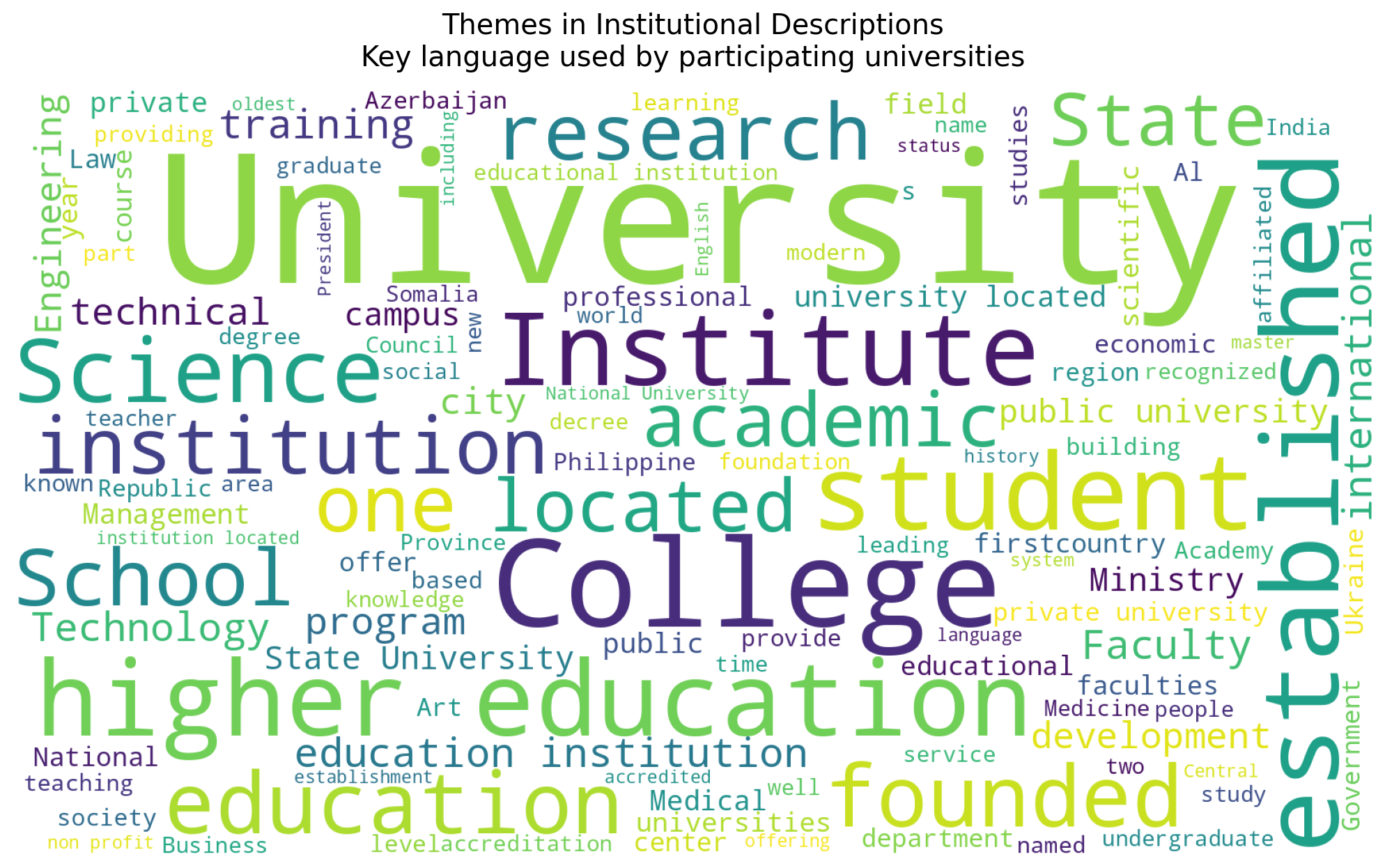

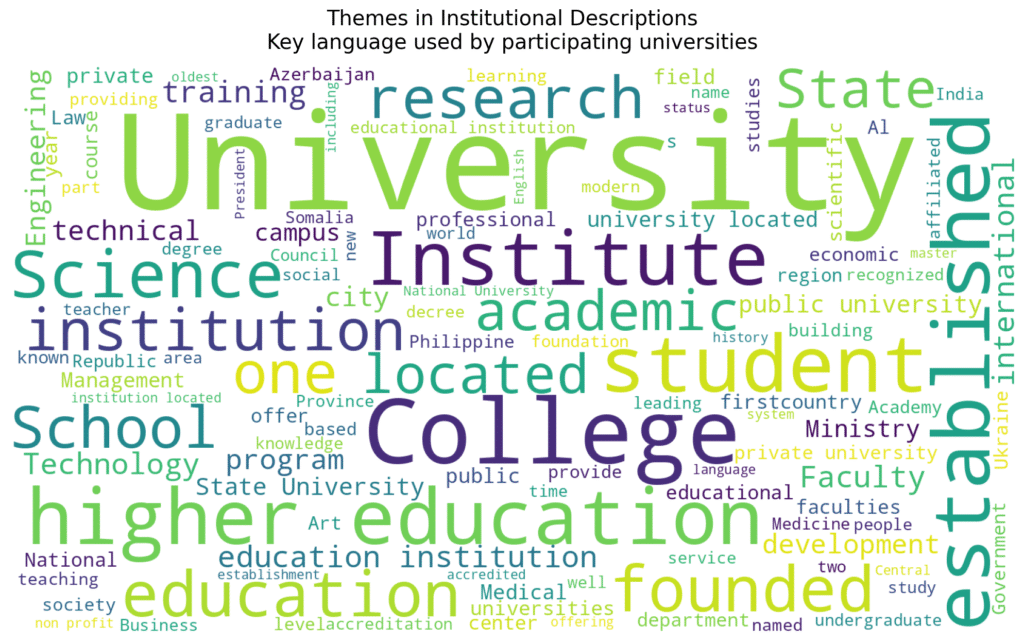

What makes this edition important is not simply the scale of participation, although the scale is significant. A ranking that gathers universities across so many systems, languages, and institutional models says something about the present moment in global higher education: institutions want to be seen, but they also want to be understood fairly. Many universities today are exhausted by narrow frameworks that reduce their identity to one or two outputs. A university may be strong in governance and student support yet modest in research volume. Another may be digitally visible, internationally connected, and highly responsive to change, even if it does not belong to a historically dominant academic ecosystem. A serious ranking in 2026 cannot afford to ignore that complexity.

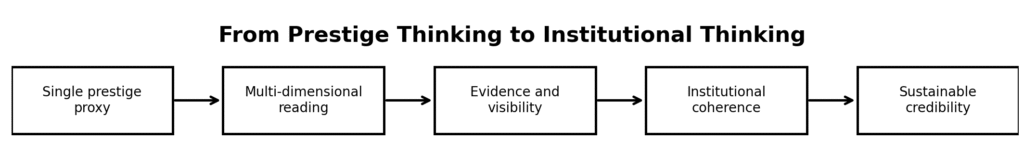

The most valuable message from this year’s edition is that visibility and depth do not need to be enemies. A university can seek recognition while still taking quality assurance seriously. It can participate in a ranking while also using the results as an internal mirror. That is precisely how institutional maturity is built. The strongest universities do not fear measurement. They fear shallow measurement. They do not resist comparison. They resist comparison that strips away context, effort, and developmental trajectory. When a ranking framework uses multiple dimensions rather than a single academic proxy, the result is not perfection, but it is certainly a more useful conversation.

The 2026 results also remind us that global higher education is no longer a conversation owned by a small club of well-known institutions. The participating universities come from regions that are often underrepresented in dominant narratives, yet they are clearly investing in systems, evidence, outreach, academic identity, and institutional positioning. That matters because the future of higher education will not be shaped only in a handful of already-famous cities. It will also be shaped in ambitious universities in Central Asia, the Middle East, South Asia, Africa, Eastern Europe, and emerging academic hubs that are quietly building capacity with impressive seriousness.

This is one reason I believe the most intelligent way to read a ranking result is not to ask only, “What is our position?” but also, “What is this result revealing about our institutional character?” If a university scored well overall, it should ask what internal habits made that possible. If it landed in the middle, it should identify where consistency is missing. If it ranked lower than expected, it should not rush into defensiveness; it should begin by investigating evidence quality, communication gaps, weak documentation, and uneven strategic execution. Rankings become dangerous when they are treated as vanity instruments. They become powerful when they are treated as institutional diagnostics.

Another encouraging feature of the 2026 edition is that it strengthens the argument for evidence culture. In many systems, universities do meaningful work every day but fail to present it coherently. The issue is not always underperformance. Sometimes it is under-documentation. Sometimes it is fragmented reporting. Sometimes it is the absence of a disciplined institutional narrative. One office knows the achievements, another holds the data, another manages the website, and another coordinates external communication, but no one turns these pieces into a clear picture. Rankings often expose exactly that kind of fragmentation. In that sense, participation is already productive, because it forces universities to ask whether their internal systems are genuinely integrated.

For leaders, this is where the real value begins. A ranking result should lead to better conversations across rectorates, quality units, international offices, media teams, IT departments, and academic councils. It should provoke questions about governance, transparency, benchmarking, and self-study. It should help institutions move from reactive reporting to strategic positioning. Not because rankings are the whole mission of a university, but because they often reveal how seriously a university takes the task of making its mission visible and measurable.

The 2026 edition also carries a broader symbolic value. It signals that institutions are looking for frameworks that acknowledge diversity without abandoning rigor. A university in Uzbekistan, Iraq, Romania, Pakistan, Egypt, Jordan, or Somalia should not have to mimic a single global template in order to be recognized. It should be able to demonstrate quality through a multidimensional approach that respects institutional context while preserving standards. That principle is not anti-excellence. On the contrary, it is a more demanding form of excellence because it asks institutions to be strong across a wider set of responsibilities.

My strongest takeaway from this year’s ranking is therefore simple: the most meaningful rankings are not the loudest; they are the most useful. They help institutions see where they are convincing, where they are inconsistent, where they are invisible, and where they are ready to rise. That is why HE Higher Education Ranking 2026 should not be read as a static list. It should be read as a living map of institutional performance, ambition, and readiness. For universities willing to learn from it, that is far more valuable than a number alone.

#HigherEducation #HERanking #UniversityRankings #QualityAssurance #AcademicLeadership #InstitutionalImprovement #HigherEdStrategy