A ranking result arrives. The certificate is shared. A post goes live. Colleagues congratulate one another. Then what? This is the moment when many institutions lose momentum. They celebrate the outcome or worry about the position, but they do not convert the result into a disciplined improvement cycle. That is a missed opportunity. A ranking becomes truly useful only when it leads to structured follow-up.

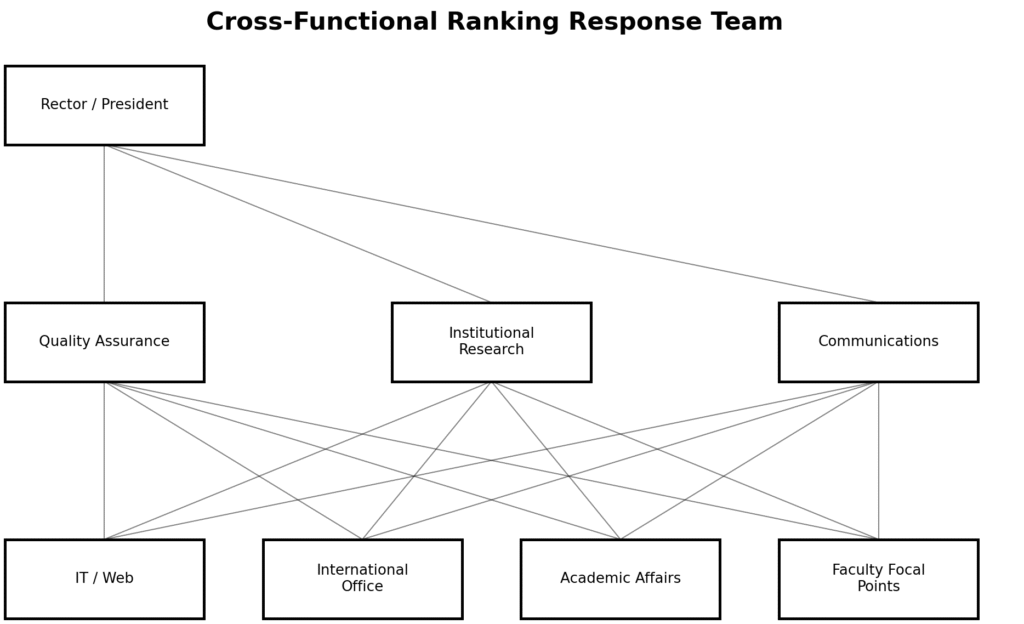

The first step is interpretation. Not emotional interpretation, but analytical interpretation. Leaders should gather the relevant units—quality assurance, institutional research, communications, international office, IT, academic affairs—and read the result carefully. What does it suggest about the institution’s current public profile? Where does it confirm strengths? Where does it hint at underexposed work, uneven systems, or missing evidence? A ranking should open a conversation, not end one.

The second step is diagnosis. Universities should identify the gaps between reality and visibility. Are there achievements that remain publicly invisible? Are important documents outdated or scattered? Are websites inconsistent across faculties? Do strategic priorities lack accessible supporting evidence? Often, institutions discover that part of the issue is not the absence of good work but the absence of integrated presentation.

The third step is ownership. Improvement does not happen when everyone agrees vaguely that “we should do better.” It happens when responsibilities are assigned. Someone must lead website architecture. Someone must coordinate evidence collection. Someone must review public documentation. Someone must connect academic units to central communication. Without ownership, the result turns into background noise.

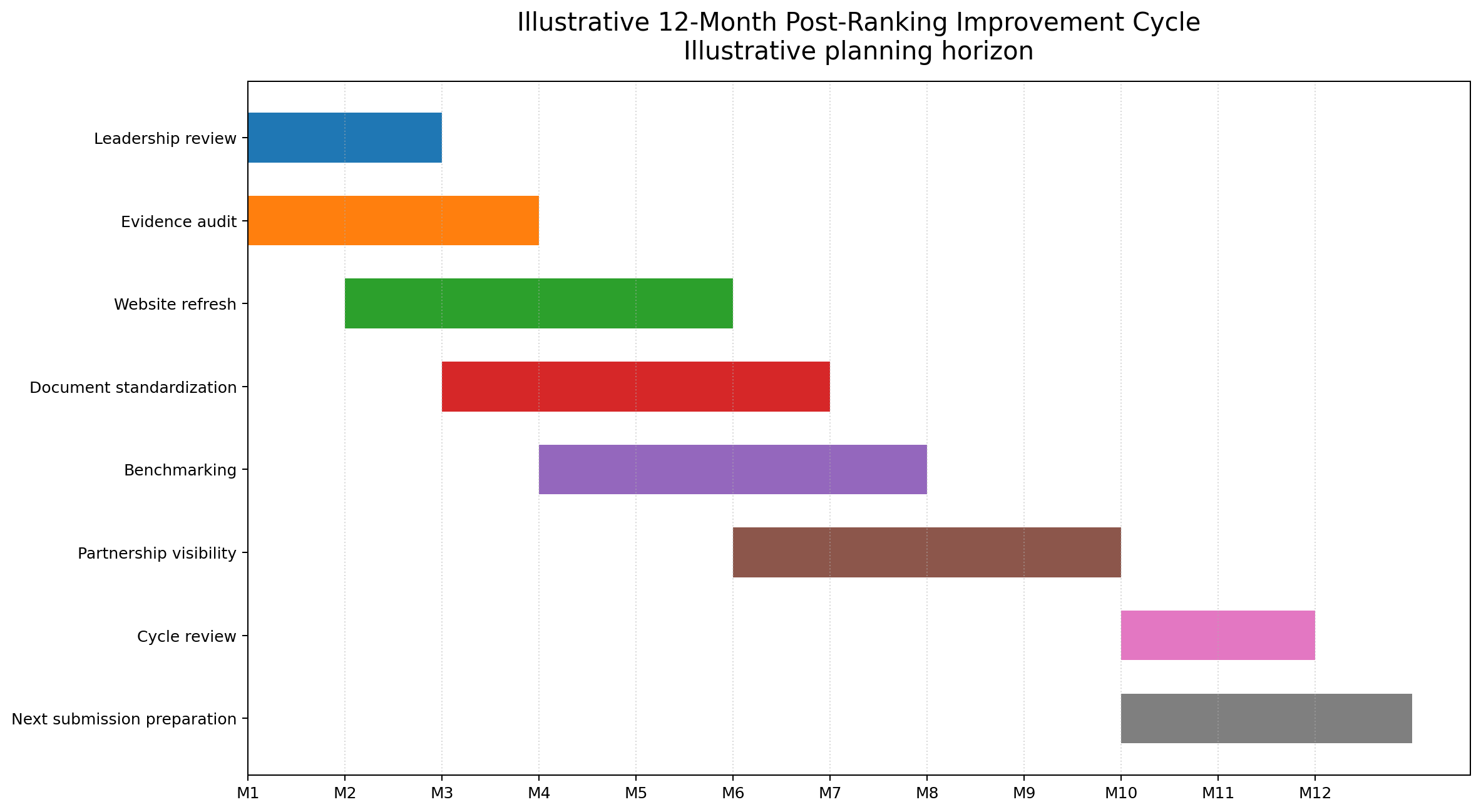

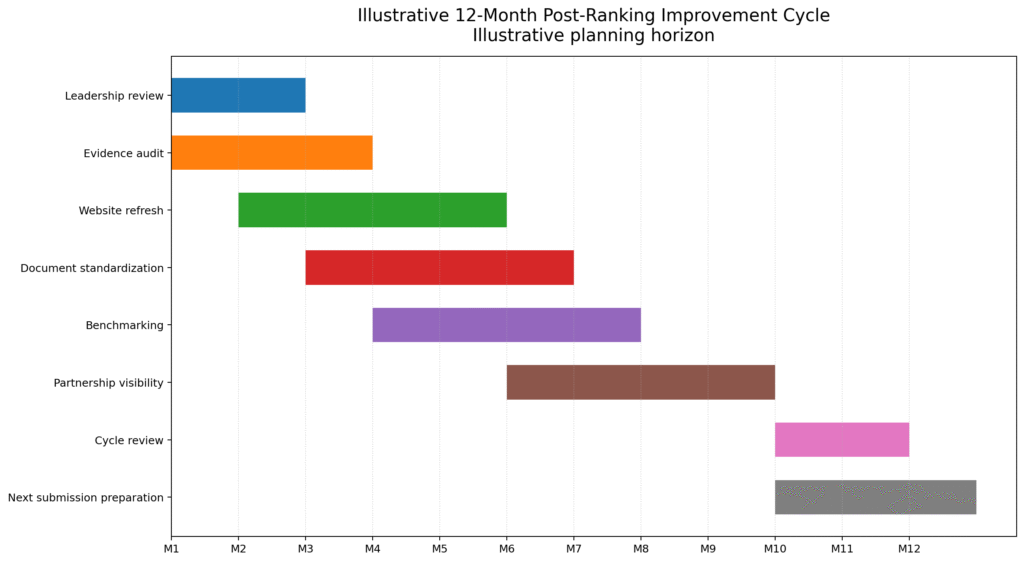

The fourth step is rhythm. Universities should turn the ranking response into a 12-month cycle with milestones, not a burst of activity before the next edition. Quarterly reviews, evidence updates, web audits, communication improvements, and periodic benchmarking keep the work moving between editions. Institutions tend to improve when they replace episodic effort with routine discipline.

The fifth step is narrative. A university should learn how to speak about its result in a way that is confident but intelligent. Not self-congratulatory, not defensive, but thoughtful. The best public message is one that celebrates progress while also affirming commitment to further improvement. That tone builds credibility with stakeholders.

The next step after receiving the result, then, is not merely to announce it but to organize around it. Rankings are most valuable when they become catalysts for institutional learning. If a university understands that, every result, whether high, middling, or disappointing, can become useful.

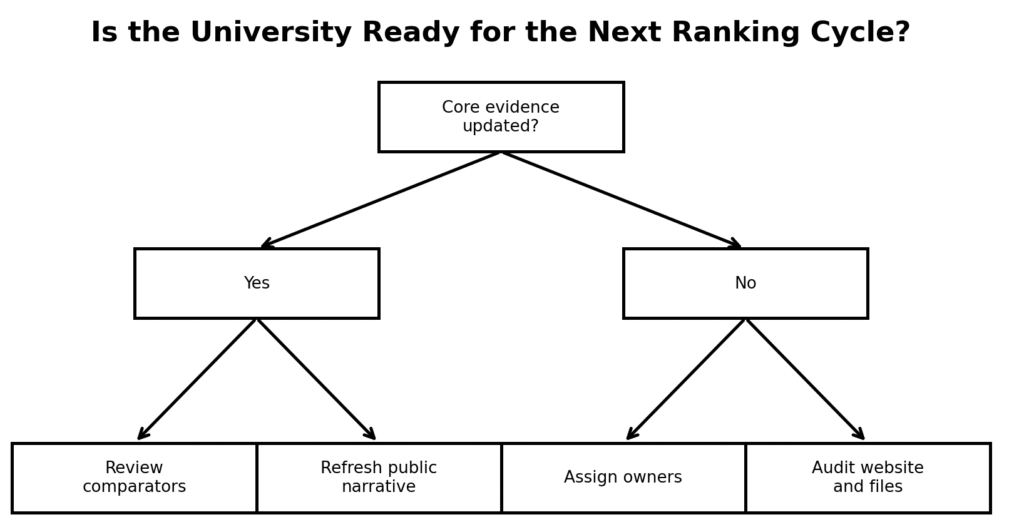

A sixth step is to build learning loops. Once actions are taken, the university should review whether they are actually making the institution easier for outside audiences to read and improving internal coordination. This is where many institutions still struggle. They implement changes but do not check whether the changes are producing institutional clarity. Simple review loops, such as monthly evidence checks, faculty web audits, and strategic communications reviews, can make the follow-up process far more effective.

A seventh step is benchmark selection. Universities should choose a few comparable institutions above them in the table and study them carefully. Not obsessively, and not imitatively, but intelligently. What kinds of public evidence do those institutions make visible? How coherent is their digital architecture? How do they communicate achievements? Which patterns are transferable? Realistic benchmarking is one of the most efficient tools available after a ranking release.

And finally, leaders should remember that constructive follow-up builds trust. Staff become more engaged when they see that external results are used thoughtfully rather than theatrically. A university that handles rankings with maturity sends a message internally: we are serious about improvement, and we know how to turn external feedback into institutional learning.

Handled this way, a ranking result becomes part of institutional governance. It joins accreditation, internal review, and strategic planning as a source of structured reflection. That is where the real value lies. The university stops asking only how it was seen and starts asking how it can become more coherent, more visible, and more convincing in the cycle ahead.

This is also why post-result leadership tone is so important. If leaders respond only with celebration or disappointment, they encourage a shallow institutional culture. But if they respond with curiosity, structure, and a clear plan, they teach the university how to use external judgment well. That is a mark of academic maturity.

A ranking result should therefore be the beginning of a disciplined conversation about coherence. It should push the institution to ask not only how it is viewed, but how effectively it is organizing the things it most wants the world to see.

#HigherEducation #HERanking #UniversityLeadership #InstitutionalImprovement #QualityAssurance #AcademicManagement #HigherEdStrategy