One of the most persistent problems in global higher education is the tendency to treat the university as if it were reducible to one function. In many ranking conversations, that one function is research. Research matters profoundly, and no serious person should argue otherwise. It advances knowledge, shapes policy, builds prestige, and strengthens academic reputation. But a university is never only a research machine. It is also a governance structure, a teaching environment, a public institution, a digital entity, an employer, a community actor, and a system of evidence. The 2026 edition of HE Higher Education Ranking is valuable precisely because it pushes back against reductionism.

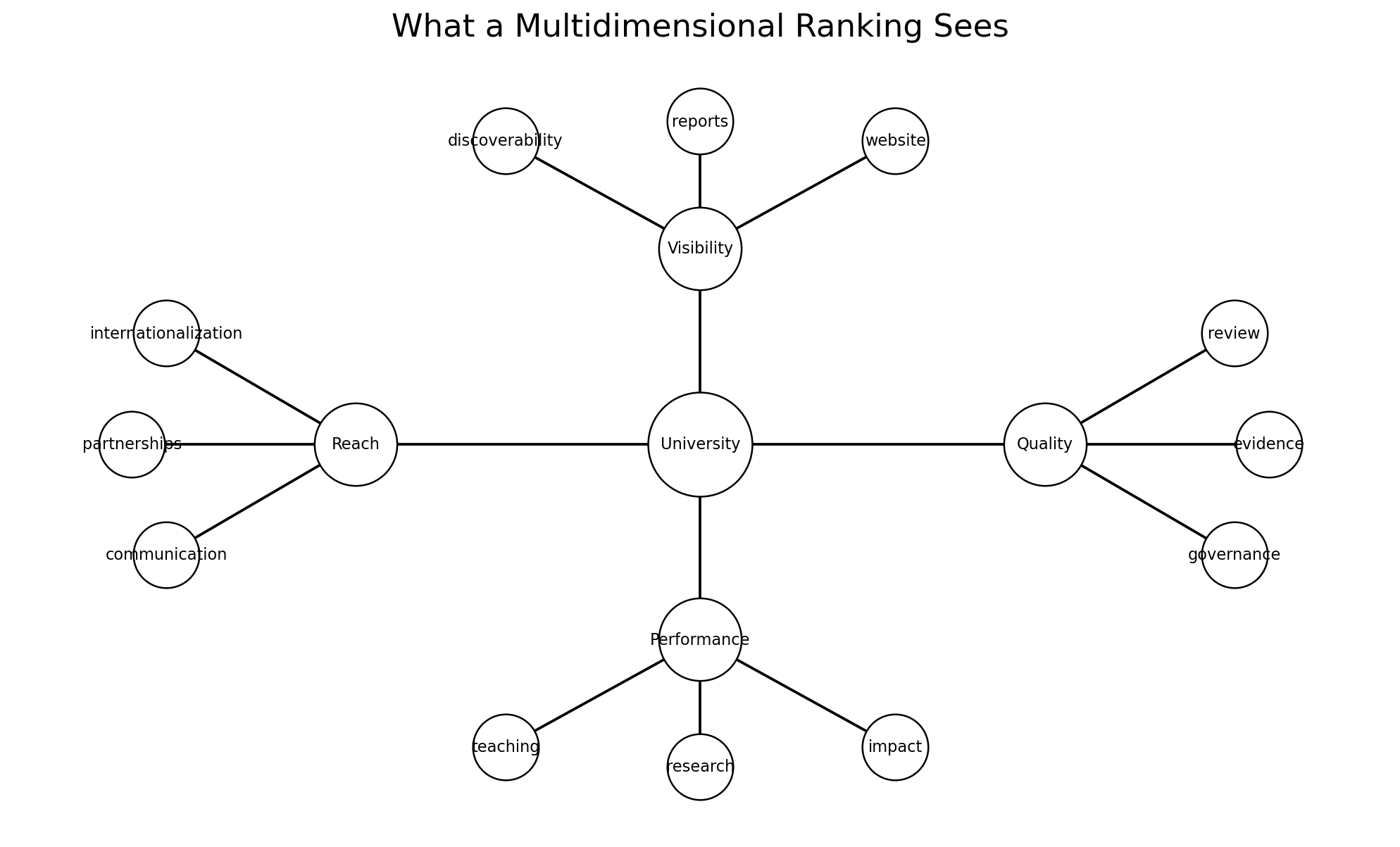

A multidimensional ranking asks a more realistic question: how well does a university perform as a university? That is a harder question than “how much research does it produce?” because it forces us to think institutionally rather than narrowly. It requires attention to leadership, structure, transparency, responsiveness, communication, documentation, and public presence. Those elements may not always sound glamorous, but they are central to institutional credibility. Universities rise not only because they publish; they rise because they function.

This distinction is especially important for institutions operating outside the world’s most research-intensive ecosystems. Many universities do meaningful work in teaching, professional formation, social engagement, regional service, international collaboration, and organizational development. Their strength may be distributed across a wider set of functions rather than concentrated in one research metric. If rankings cannot see that, they risk reproducing a distorted image of higher education. They risk rewarding one institutional form as if it were the only one that counts.

That is why I see the 2026 results as a useful corrective. The table includes universities from very different contexts, some of which may never resemble the classic research megainstitutions that dominate certain global rankings. But that does not make them weak institutions. On the contrary, some are highly effective in building coherent academic identity, strong systems, visible evidence, and public credibility. A university that manages itself well, communicates clearly, documents rigorously, and serves its academic mission coherently deserves to be seen.

There is also a policy implication here. If national systems define excellence too narrowly, they will pressure all institutions to imitate one model even when that model does not fit their mission. The result is often wasted energy, artificial performance theater, and institutional confusion. A better approach is to recognize that universities can be strong in different ways, provided they remain rigorous, transparent, and strategically coherent. Multidimensional evaluation helps protect that principle.

For university leaders, this broader view is liberating, but only if they use it honestly. It does not mean institutions can ignore research or hide behind vague mission statements. It means they must strengthen the whole architecture of institutional performance. They must ask whether governance is working, whether evidence is organized, whether the public face of the university reflects its actual quality, and whether different institutional units are aligned around a coherent academic narrative. These are demanding questions. But they are the right ones.

What I appreciate in HE Higher Education Ranking 2026 is that it invites this more mature conversation. It suggests that universities should be assessed not only as producers of outputs, but as institutions with structure, mission, systems, and public responsibility. In an era when higher education faces pressure from every direction (financial, technological, political, reputational), that broader institutional lens is not optional. It is necessary.

The future of higher education will belong to universities that can perform across multiple dimensions, not because they are trying to please rankings, but because the world now demands that kind of institutional intelligence. Research remains central. But it is not the whole university. It never was.

This broader lens also protects a very important principle: mission diversity. Not every university exists to become the same kind of institution. Some are deeply rooted in regional service. Some specialize in professional education. Some are teaching-intensive. Some are fast-growing private institutions trying to build credibility quickly. Some balance research with applied impact and social reach. A ranking that can only recognize one form of excellence quietly punishes all the others. A multidimensional approach does not eliminate hierarchy, but it does create a fairer framework for recognizing difference.

There is a second reason this matters. Universities are increasingly judged by publics who care about more than publications. Students care about learning environments and employability. Families care about trust and stability. Partners care about responsiveness and clarity. Regulators care about governance and documentation. A university that excels in research but fails in institutional coherence may still struggle in the real world. This is why the whole-institution perspective is not softer. It is more realistic.

For academic leaders, the implication is demanding but constructive. They must resist the temptation to chase one visible metric at the expense of institutional balance. Durable reputation is usually built when research, governance, communication, teaching quality, and public credibility reinforce rather than undermine one another. That is hard work, but it is also the only kind of excellence that tends to last.

#HigherEducation #HERanking #Research #UniversityLeadership #QualityAssurance #InstitutionalPerformance #HigherEdPolicy